Using Gemma 4 for Agentic Coding

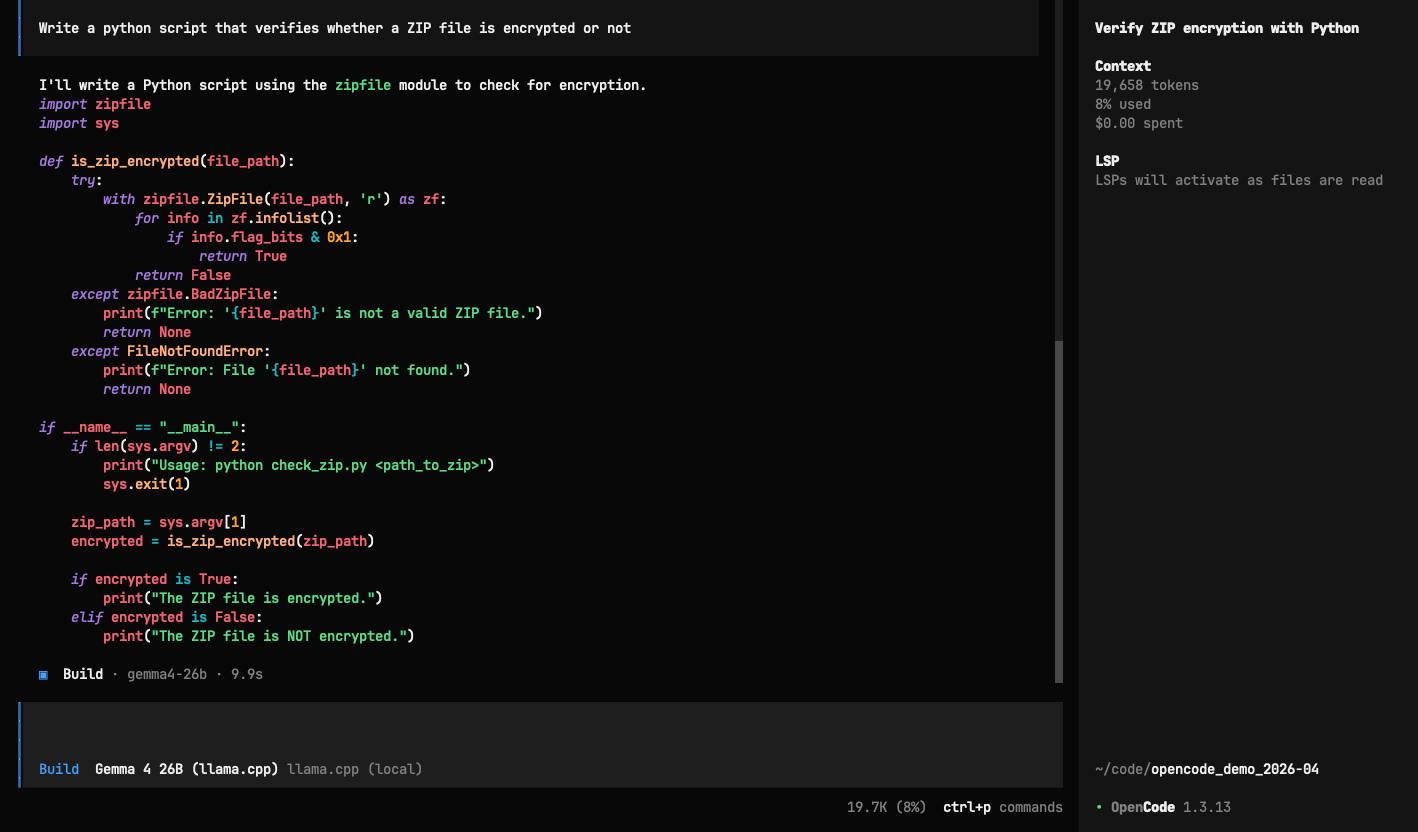

Google's Gemma 4 launched yesterday, and I wanted to try it as a local coding agent. The setup: Gemma 4 26B running through Ollama on an M4 Pro MacBook with 48GB RAM, connected to OpenCode as the agent frontend. The idea is a free, private alternative to cloud coding agents: the model reads files, writes code, and runs commands on your own machine.

The model scores 77% on LiveCodeBench, so I expected this to work reasonably well. Instead, it couldn't make a single tool call out of the box. It would plan what to do, say "I'll create the file now," and then produce an empty response. No file, no tool call, nothing.

I used Claude Code to help me trace the problem through the stack, and it turned out to be four separate infrastructure bugs, none of which are in the model itself.

Ollama's OpenAI endpoint ignores the think parameter

Gemma 4 has a thinking mode where it reasons internally before responding. In multi-turn tool conversations, the model spends all its output tokens on internal reasoning and never actually produces the tool call. The fix is passing think: false in the request, and this works on Ollama's native /api/chat endpoint. But the /v1/chat/completions endpoint, which is what OpenCode and most other tools use, simply ignores the parameter. Same server, same model, different behavior depending on which endpoint you hit.

Native API + think:false → tool_calls: ✓, tokens: 22

OpenAI API + think:false → tool_calls: ✗, tokens: 932 (all reasoning)
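The comparison above can be reproduced with a minimal Python sketch. It assumes Ollama is on its default port 11434 and uses a hypothetical model tag gemma4:26b (substitute whatever ollama list shows). It also uses a made-up write_file tool. The sketch sends the same think: false body to both endpoints and reports which one returned a tool call. The reported failure happens in multi-turn conversations, so extend the messages with earlier assistant and tool turns to trigger it.

```python
import json
import urllib.request

OLLAMA = "http://localhost:11434"  # Ollama's default port
MODEL = "gemma4:26b"  # hypothetical tag: use the name `ollama list` shows

# Hypothetical tool in the OpenAI function schema; both endpoints accept it.
WRITE_TOOL = {
    "type": "function",
    "function": {
        "name": "write_file",
        "description": "Write text to a file",
        "parameters": {
            "type": "object",
            "properties": {
                "path": {"type": "string"},
                "content": {"type": "string"},
            },
            "required": ["path", "content"],
        },
    },
}


def build_body(messages):
    """One request body for both endpoints; only the URL differs."""
    return {
        "model": MODEL,
        "messages": messages,
        "tools": [WRITE_TOOL],
        "stream": False,
        "think": False,  # per the post: honoured by /api/chat, ignored by /v1
    }


def native_tool_calls(resp):
    # /api/chat replies look like {"message": {..., "tool_calls": [...]}}
    return (resp.get("message") or {}).get("tool_calls") or []


def openai_tool_calls(resp):
    # /v1/chat/completions replies look like {"choices": [{"message": {...}}]}
    choices = resp.get("choices") or [{}]
    return (choices[0].get("message") or {}).get("tool_calls") or []


def post_json(url, body):
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode(),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as r:
        return json.load(r)


def compare(messages):
    """Send the same body to both endpoints; report which returned tool calls."""
    body = build_body(messages)
    native = post_json(f"{OLLAMA}/api/chat", body)
    openai = post_json(f"{OLLAMA}/v1/chat/completions", body)
    return {
        "native": bool(native_tool_calls(native)),
        "openai": bool(openai_tool_calls(openai)),
    }
```

Calling compare() with a conversation returns a dict of two booleans, one per endpoint. Nothing touches the network until compare() is called.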

Streaming breaks multi-turn tool calls

Even after routing requests through Ollama's native API via a proxy, streaming responses in multi-turn conversations dropped tool calls. The same request with stream: false returned the tool call correctly, but with stream: true the response came back empty.
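Here is a minimal Python sketch for checking whether a stream actually dropped the tool call. It assumes Ollama's native /api/chat streaming format: one JSON object per line, each carrying a partial message, with done set to true on the last. The sketch folds every chunk together and collects tool calls from any chunk, not just the final one. It then compares the result against the same request sent with stream: false. The function names are hypothetical.

```python
import json


def collect_stream(lines):
    """Fold Ollama /api/chat NDJSON stream lines into one message.

    Tool calls can appear on any chunk, so they are gathered from all of
    them rather than only from the final done=true chunk.
    """
    content, thinking, tool_calls = [], [], []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines between JSON objects
        chunk = json.loads(line)
        msg = chunk.get("message") or {}
        content.append(msg.get("content") or "")
        thinking.append(msg.get("thinking") or "")
        tool_calls.extend(msg.get("tool_calls") or [])
        if chunk.get("done"):
            break
    return {
        "content": "".join(content),
        "thinking": "".join(thinking),
        "tool_calls": tool_calls,
    }


def stream_dropped_tool_calls(stream_lines, nonstream_resp):
    """True if the stream:false reply has tool calls but the streamed one has none."""
    streamed = collect_stream(stream_lines)["tool_calls"]
    expected = (nonstream_resp.get("message") or {}).get("tool_calls") or []
    return bool(expected) and not streamed
```

With urllib, you can iterate the response object directly. Each iteration yields one line as bytes, which strip() and json.loads both accept.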

The Gemma 4 parser corrupts quoted arguments

There's an open PR on Ollama (#15255) that fixes a bug in the Gemma 4 parser where tool call arguments containing quotes get corrupted. Since practically every HTML snippet and many shell commands contain quotes, this bug triggers frequently.
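To see why quotes are so fragile, here is a small Python illustration of the failure class. It is not Ollama's parser (which is written in Go), and the actual bug and fix in PR #15255 may differ in detail. It assumes a hypothetical write tool with path and content arguments. Splicing raw HTML into a JSON template breaks on the first double quote, while proper JSON encoding escapes it. The arguments_ok check is the kind of validation a proxy could run before forwarding a tool call.

```python
import json

# Typical write-tool payload: HTML is full of double quotes.
html = '<a href="/docs" class="btn">Docs</a>'


def naive_encode(path, content):
    # Splicing raw text into a JSON template: every " in content breaks it.
    return '{"path": "' + path + '", "content": "' + content + '"}'


def safe_encode(path, content):
    # json.dumps escapes quotes (and backslashes, newlines) correctly.
    return json.dumps({"path": path, "content": content})


def arguments_ok(raw, expected_content):
    """Check that a tool-call arguments string decodes to the intended content."""
    try:
        decoded = json.loads(raw)
    except json.JSONDecodeError:
        return False
    return isinstance(decoded, dict) and decoded.get("content") == expected_content
```

The naive string ends its JSON string value at the first quote inside href, so decoding fails. The json.dumps version round-trips the HTML unchanged.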

Everyone else had the same problem

In the HN launch thread, others reported the same issues: tool call errors, models ignoring prompt instructions, and Ollama being described as "more of a bottleneck than the model itself." The llama.cpp team merged template parser fixes for Gemma 4 on April 2 and 3, and LM Studio shipped fixes the same day. This is fairly typical launch-day infrastructure breakage, similar to what happened with Qwen 3.5.

The fix: llama.cpp without --jinja

After working through several Ollama workarounds (patching OpenCode from source, building a proxy to translate between Ollama's two API formats), the thing that actually solved it was switching to llama.cpp built from source.
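The post doesn't include the proxy's code. Below is a minimal Python sketch of the translation such a proxy would perform between the two formats. It assumes text-only messages and non-streaming replies, and all function names are hypothetical. The HTTP server around it (listen locally, forward to Ollama's /api/chat) is left out. The sketch forces stream: false and think: false, and moves sampling fields under options. It also converts tool-call arguments between OpenAI's JSON-string form and Ollama's object form. A client that insists on streaming would additionally need the reply re-emitted as server-sent events, which this sketch does not do.

```python
import json
import time
import uuid


def _to_native_message(m):
    """OpenAI message -> Ollama native message (text content only)."""
    out = {"role": m["role"], "content": m.get("content") or ""}
    if m.get("tool_calls"):
        out["tool_calls"] = [
            {
                "function": {
                    "name": tc["function"]["name"],
                    # OpenAI sends arguments as a JSON string; Ollama wants an object.
                    "arguments": json.loads(tc["function"].get("arguments") or "{}"),
                }
            }
            for tc in m["tool_calls"]
        ]
    return out


def openai_to_native(body):
    """Rewrite a /v1/chat/completions request as an /api/chat request."""
    native = {
        "model": body["model"],
        "messages": [_to_native_message(m) for m in body["messages"]],
        "stream": False,  # streamed multi-turn replies dropped tool calls
        "think": False,   # the flag the OpenAI endpoint ignored
    }
    if body.get("tools"):
        native["tools"] = body["tools"]
    # Sampling settings live under "options" on the native API.
    options = {k: body[k] for k in ("temperature", "top_p", "top_k") if k in body}
    if options:
        native["options"] = options
    return native


def native_to_openai(resp, model):
    """Wrap an /api/chat reply in the chat.completion shape clients expect."""
    msg = resp.get("message") or {}
    tool_calls = [
        {
            "id": f"call_{uuid.uuid4().hex[:12]}",
            "type": "function",
            "function": {
                "name": tc["function"]["name"],
                "arguments": json.dumps(tc["function"].get("arguments", {})),
            },
        }
        for tc in msg.get("tool_calls") or []
    ]
    message = {"role": "assistant", "content": msg.get("content") or None}
    if tool_calls:
        message["tool_calls"] = tool_calls
    return {
        "id": f"chatcmpl-{uuid.uuid4().hex[:12]}",
        "object": "chat.completion",
        "created": int(time.time()),
        "model": model,
        "choices": [
            {
                "index": 0,
                "message": message,
                "finish_reason": "tool_calls" if tool_calls else "stop",
            }
        ],
    }
```

Tool-result messages pass through with role tool and their text content; the tool_call_id is dropped, because the native API does not use it.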

A small breakthrough came when llama.cpp in --jinja mode threw a 500 error whose message contained the model's complete raw output: a full landing page with Tailwind CSS, navigation bar, hero section, and feature grid, all correctly wrapped in a write tool call. The model had been producing correct tool calls the entire time; the infrastructure was simply failing to parse them.

Without --jinja, llama.cpp parsed Gemma 4's native tool format correctly on every attempt. No proxy needed, full 256K context window, 22GB memory footprint on the M4 Pro.

```bash
llama-server \
  --model gemma-4-26B-A4B-it-UD-Q4_K_M.gguf \
  --port 11436 \
  --ctx-size 262144 \
  --n-gpu-layers 99 \
  --flash-attn on
```
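Once the server is up, a quick end-to-end check is to ask for a file whose content contains double quotes, which exercises the quoting problem described earlier. The sketch below rests on a few assumptions: llama-server's OpenAI-compatible /v1/chat/completions route on the port above, a hypothetical write_file tool, and that omitting the model field is acceptable for a single-model server. The names first_tool_call and smoke_test are illustrative.

```python
import json
import urllib.request

BASE = "http://localhost:11436"  # matches --port in the command above

# Hypothetical write tool, in the OpenAI function schema.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "write_file",
        "description": "Write text to a file",
        "parameters": {
            "type": "object",
            "properties": {
                "path": {"type": "string"},
                "content": {"type": "string"},
            },
            "required": ["path", "content"],
        },
    },
}]


def build_request(prompt):
    # "model" omitted: assumed optional since llama-server serves the one model it loaded.
    return {
        "messages": [{"role": "user", "content": prompt}],
        "tools": TOOLS,
        "stream": False,
    }


def first_tool_call(resp):
    """Return (name, arguments) for the first tool call, or None."""
    choices = resp.get("choices") or []
    if not choices:
        return None
    calls = (choices[0].get("message") or {}).get("tool_calls") or []
    if not calls:
        return None
    fn = calls[0]["function"]
    # OpenAI-style responses carry arguments as a JSON string.
    return fn["name"], json.loads(fn["arguments"])


def smoke_test(prompt='Create index.html containing <h1 class="title">Hi</h1>'):
    req = urllib.request.Request(
        f"{BASE}/v1/chat/completions",
        data=json.dumps(build_request(prompt)).encode(),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as r:
        return first_tool_call(json.load(r))
```

smoke_test() returns the tool name and decoded arguments when the serving layer parsed the call. It returns None when the reply came back without one, which is the "I'll create the file now" symptom.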

Practical takeaways

If you want to use Gemma 4 with tool calling right now, build llama.cpp from source, skip --jinja, and download the GGUF from Unsloth on HuggingFace. Ollama works fine for plain chat, but don't rely on it for agentic tool use with this model yet.

For sampling parameters, use temperature: 1.0, top_p: 0.95, top_k: 64 as recommended by Google and confirmed by danielhanchen from Unsloth.
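The following small Python sketch shows where those three values go on each server, assuming request bodies are plain dicts. On Ollama's native /api/chat, sampling settings belong inside an options object. llama-server's OpenAI-compatible endpoint takes them as top-level fields, with top_k being a llama.cpp extension beyond the OpenAI schema. The helper names are hypothetical.

```python
# Recommended Gemma 4 sampling settings, as quoted above.
GEMMA4_SAMPLING = {"temperature": 1.0, "top_p": 0.95, "top_k": 64}


def for_llama_server(body):
    """llama-server's /v1/chat/completions: sampling fields at top level.

    top_k is a llama.cpp extension, not part of the OpenAI schema.
    """
    return {**body, **GEMMA4_SAMPLING}


def for_ollama_native(body):
    """Ollama's /api/chat: sampling fields inside "options".

    Existing options (e.g. num_ctx) are kept; the input dict is not mutated.
    """
    options = {**body.get("options", {}), **GEMMA4_SAMPLING}
    return {**body, "options": options}
```

Keeping the values in one constant means switching backends mid-debugging doesn't silently change sampling behaviour.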

Both Ollama and llama.cpp are actively merging fixes, so this will likely improve within days. But if you're trying it today and the tool calls aren't working, it's almost certainly the serving layer, not the model.